Table of Contents

- Foreward

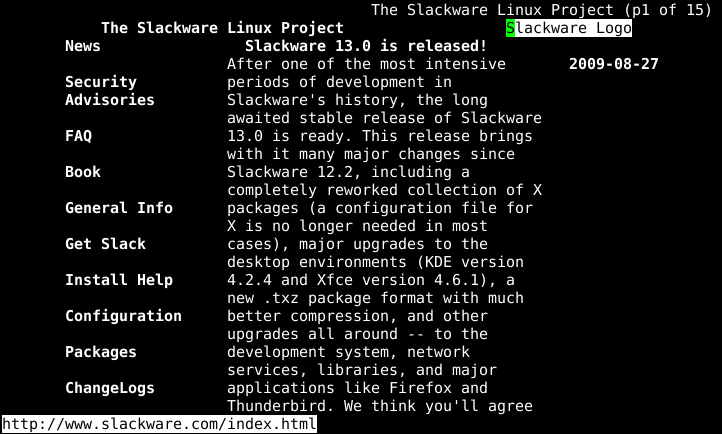

- 1. Introduction to Slackware

- 2. Installation

- 3. Booting

- 4. Basic Shell Commands

- 5. The Bourne Again Shell

- 6. Process Control

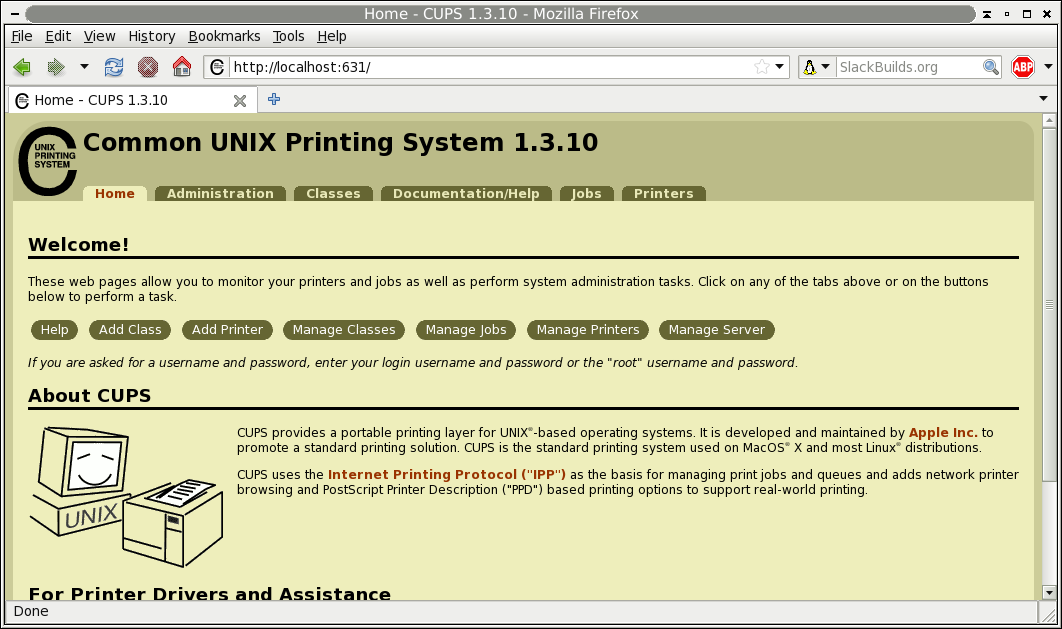

- 7. The X Window System

- 8. Printing

- 9. Users and Groups

- 10. Filesystem Permissions

- 11. Working with Filesystems

- 12. vi

- 13. Emacs

- 14. Networking

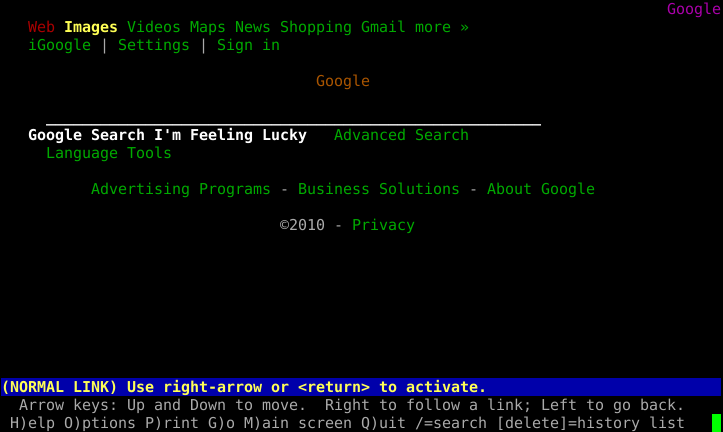

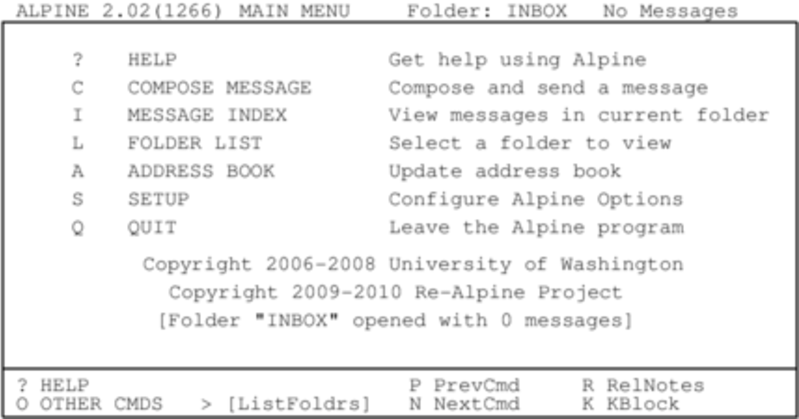

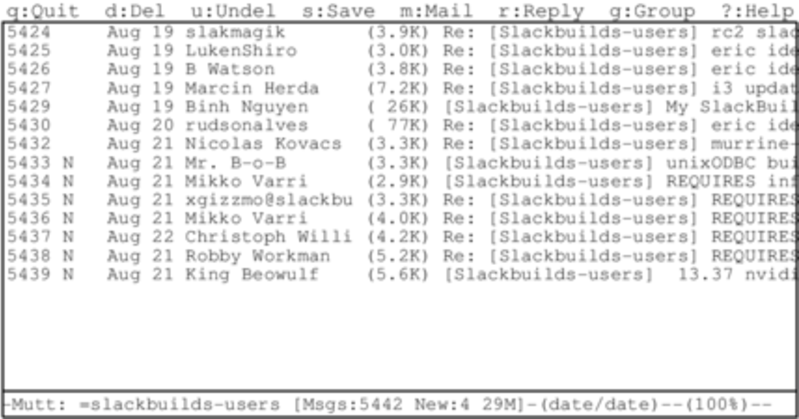

- 15. Wireless Networking

- 16. Basic Networking Utilities

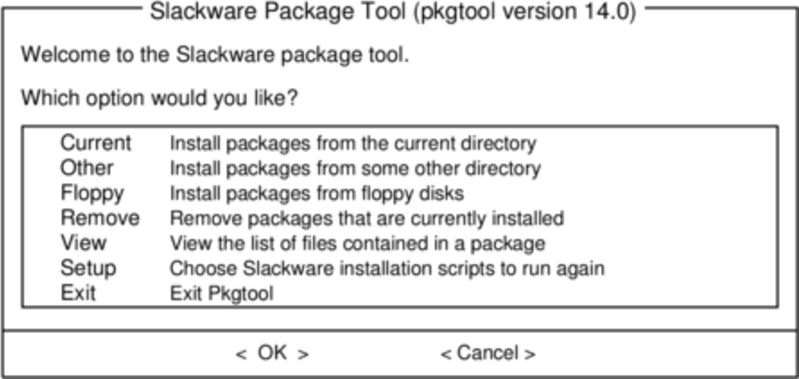

- 17. Package Management

- 18. Keeping Track of Updates

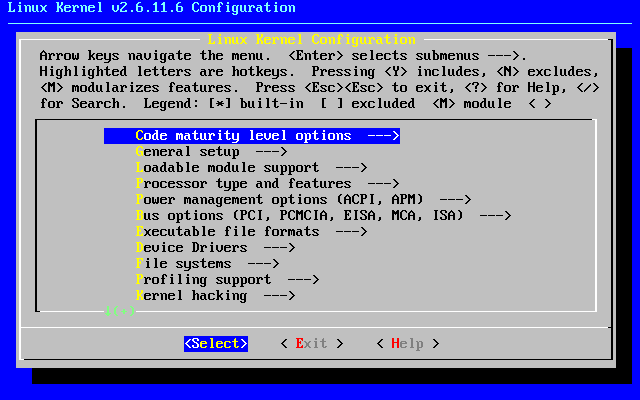

- 19. The Linux Kernel

List of Tables

- 4.1. Man Page Sections

- 4.2. tar Arguments

- 10.1. Permissions of /bin/ls

- 10.2. Octal Permissions

- 10.3. Alphabetic Permissions

- 10.4. Alphabetic Users and Groups

- 10.5. SUID, SGID, and "Sticky" Permissions

- 11.1. Filesystem Layout

- 11.2. Common mount options

- 12.1. vi cursor movement

- 12.2. vi Cheat Sheet

- 13.1. Emacs Cursor Movement

- 13.2. Accessing Emacs Documentation

- 13.3. Emacs Cheat Sheet

- 16.1. rsync Arguments

Table of Contents

Slackware Linux may be one of the oldest surviving Linux distributions but it's still regularly updated and includes the latest releases of many of the most popular free software programs. While Slackware does aim to maintain its traditional UNIX roots and values, there is no escaping "progress". Subsystems change, window managers come and go and new ways are devised to manage the complexities of a modern operating system. While we do resist change for change's sake, it's inevitable that as things evolve documentation becomes stale — books are no exception.

Lorem ipsum dolor sit amet, consectetur adipisicing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua. Ut enim ad minim veniam, quis nostrud exercitation ullamco laboris nisi ut aliquip ex ea commodo consequat. Duis aute irure dolor in reprehenderit in voluptate velit esse cillum dolore eu fugiat nulla pariatur.

Lorem ipsum dolor sit amet, consectetur adipisicing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua. Ut enim ad minim veniam, quis nostrud exercitation ullamco laboris nisi ut aliquip ex ea commodo consequat. Duis aute irure dolor in reprehenderit in voluptate velit esse cillum dolore eu fugiat nulla pariatur.

Slackware has a long tradition of excellence. Started in 1992 and first released in 1993, Slackware is the oldest surviving commercial Linux distribution. Slackware's focus on making a clean, simple Linux distribution that is as UNIX-like as possible makes it a natural choice for those people who really want to learn about Linux and other UNIX-like operating systems. In a 2012 interview, Slackware founder and benevolent dictator for life, Patrick Volkerding, put it thusly.

"I try not to let things get juggled around simply for the sake of making them different. People who come back to Slackware after a time tend to be pleasantly surprised that they don't need to relearn how to do everything. This has given us quite a loyal following, for which I am grateful."

Slackware's simplicity makes it ideal for those users who want to create their own custom systems. Of course, Slackware is great in its own right as a desktop, workstation, or server as well.

There are a great number of differences between Slackware and other mainstream distributions such as Red Hat, Debian, and Ubuntu. Perhaps the greatest difference is the lack of "hand-holding" that Slackware will do for the administrator. Many of those other distributions ship with custom graphical configuration tools for all manner of services. In many cases, these configuration tools are the preferred method of setting up applications on these systems and will overwrite any changes you make to the configuration files via other means. These tools often make it easy (or at least possible) for a rookie with no in-depth understanding of his system to setup basic services; however, they also make it difficult to do anything too out of the ordinary. In contrast, Slackware expects you, the system administrator, to do these tasks on your own. Slackware provides no general purpose setup tools beyond those included with the source code published by upstream developers. This means there is often a somewhat steeper learning curve associated with Slackware, even for those users familiar with other Linux distributions, but also makes it much easier to do whatever you want with your operating system.

Also, you may hear users of other distributions say that Slackware has no package management system. This is completely and obviously false. Slackware has always had package management (see Chapter 17, Package Management for more information). What it does not have is automatic dependency resolution - Slackware's package tools trade dependency management for simplicity, ease-of-use, and reliability.

Each piece of Slackware (this is true of all Linux distributions) is developed by different people (or teams of people), and each group has their own ideas about what it means to be "free". Because of this, there are literally dozens and dozens of different licenses granting you different permissions regarding their use or distribution. Fortunately dealing with free software licenses isn't as difficult as it may first appear. Most things are licensed with either the Gnu General Public License or the BSD license. Sometimes you'll encounter a piece of software with a different license, but in almost all cases they are remarkably similar to either the GPL or the BSD license.

Probably the most popular license in use within the Free Software community is the GNU General Public License. The GPL was created by the Free Software Foundation, which actively works to create and distribute software that guarantees the freedoms which they believe are basic rights. In fact, this is the very group that coined the term "Free Software." The GPL imposes no restrictions on the use of software. In fact, you don't even have to accept the terms of the license in order to use the software, but you are not allowed to redistribute the software or any changes to it without abiding by the terms of the license agreement. A large number of software projects shipped with Slackware, from the Linux kernel itself to the Samba project, are released under the terms of the GPL.

Another very common license is the BSD license, which is arguably "more free" than the GPL because it imposes virtually no restrictions on derivative works. The BSD license simply requires that the copyright remain intact along with a simple disclaimer. Many of the utilities specific to Slackware are licensed with a BSD-style license, and this is the preferred license for many smaller projects and tools.

Table of Contents

Slackware's installation is a bit more simplistic than that of most other Linux distributions and is very reminiscent of installing one of the varieties of BSD operating systems. If you're familiar with those, you should feel right at home. If you've never installed Slackware or have only used distributions that make use of graphical installers, you may feel a bit overwhelmed at first. Don't panic! The installation is very easy once you understand it, and it works on just about any x86 or x86_64 platform.

The latest versions of Slackware Linux are distributed on DVD or CD

media, but Slackware can be installed in a variety of other ways. We're

only going to focus on the most common method - booting from a DVD - in

this book. If you don't have a CD or DVD drive, you might wish to take

a look at the various README files inside the

usb-and-pxe-installers directory at your favorite

Slackware mirror. This directory includes the necessary files and

instructions for booting the Slackware installer from a USB flash drive

or from a network card that support PXE. The files there are the best

source of information available for such boot methods.

Booting the installer is simply a process of inserting the Slackware install disk into your CD or DVD drive and rebooting. You may have to enter your computer's BIOS and alter the boot order to place the optical drive at a higher boot priority than your hard drives. Some computers allow you to change the boot order on the fly by pressing a specific function key during system boot-up. Since every computer is different, we can't offer instructions on how to do this, but the method is simple on nearly all machines.

Once your computer boots from the CD you'll be taken to a screen that allows you to enter any special kernel parameters. This is here primarily to allow you to use the installer as a sort of rescue disk. Some systems may need special kernel parameters in order to boot, but these are very rare exceptions to the norm. Most users can simply press enter to let the kernel boot.

Welcome to Slackware version 14.0 (Linux kernel 3.2.27)! If you need to pass extra parameters to the kernel, enter them at the prompt below after the name of the kernel to boot (huge.s etc). In a pinch, you can boot your system from here with a command like: boot: huge.s root=/dev/sda1 rdinit= ro In the example above, /dev/sda1 is the / Linux partition. To test your memory with memtest86+, enter memtest on the boot line below. This prompt is just for entering extra parameters. If you don't need to enter any parameters, hit ENTER to boot the default kernel "huge.s" or press [F2] for a listing of more kernel choices.

After pressing ENTER you should see a lot of text go flying across your screen. Don't be alarmed, this is all perfectly normal. The text you see is generated by the kernel during boot-up as it discovers your hardware and prepares to load the operating system (in this case, the installer). You can later read these messages with the dmesg(1) command if you're interested. Often these messages are very important for troubleshooting any hardware problems you may have. Once the kernel has completed its hardware discovery, the messages should stop and you'll be given an option to load support for non-us keyboards.

<OPTION TO LOAD SUPPORT FOR NON-US KEYBOARD> If you are not using a US keyboard, you may need to load a different keyboard map. To select a different keyboard map, please enter 1 now. To continue using the US map, just hit enter. Enter 1 to select a keyboard map: _

Entering 1 and pressing ENTER will give you a list of keyboard mappings. Simply select the mapping that matches your keyboard type and continue on.

Welcome to the Slackware Linux installation disk! (version 14.0)

###### IMPORTANT! READ THE INFORMATION BELOW CAREFULLY. ######

- You will need one or more partitions of type 'Linux' prepared. It is also

recommended that you create a swap partition (type 'Linux swap') prior

to installation. For more information, run 'setup' and read the help file.

- If you're having problems that you think might be related to low memory, you

can try activating a swap partition before you run setup. After making a

swap partition (type 82) with cfdisk or fdisk, activate it like this:

mkswap /dev/<partition> ; swapon /dev/<partition>

- Once you have prepared the disk partitions for Linux, type 'setup' to begin

the installation process.

- If you do not have a color monitor, type: TERM=vt100

before you start 'setup'.

You may now login as 'root'.

slackware login: root

Unlike other Linux distributions which boot you directly into a

dedicated installer program, Slackware's installer places you in a

limited Linux distribution loaded into your system's RAM. This

limited distribution is then used to run all the installation programs

manually, or can be used in emergencies to fix a broken system that

fails to boot. Now that you're logged in as root (there is no password

within the installer) it's time to start setting up your disks. At this

point, you may setup software RAID or LVM support if you wish or even

an encrypted root partition, but

those topics are outside of the scope of this book. I encourage you to

refer to the excellent README_RAID.TXT,

README_LVM.TXT, and

README_CRYPT.TXT files on your CD if you desire to

setup your system with these advanced tools. Most users won't have any

need to do so and should proceed directly to partitioning.

Unlike many other Linux distributions, Slackware does not make use of a dedicated graphical disk partitioning tool in its installer. Rather, Slackware makes use of the traditional Linux partitioning tools, the very same tools that you will have available once you've installed Slackware. Traditionally, partitioning is performed with either fdisk(8) or cfdisk(8), both of which are console tools. cfdisk is preferred by many people because it is curses menu-based, but either works well. Additionally, Slackware includes sfdisk(8) and gdisk(8). These are more powerful command-line partitioning tools. gdisk is required to alter GUID partition tables found on some of today's larger hard drives. In this book, we're going to focus on using fdisk, but the other tools are similar. You can find additional instructions for using these other tools online or in their man pages.

In order to partition your hard drive, you'll first need to know how to

identify it. In Linux, all hardware is identified by a special file

called a device file. These are (typically) located in the

/dev directory. Nearly all hard drives today,

are identified as SCSI hard drives by

the kernel, and as such, they'll be assigned a device node such as

/dev/sda. (Once upon a time each hard drive type

had its own unique identifier such as /dev/hda for the first IDE drive.

Over the years the kernel's SCSI subsystem morphed into a generic drive

access system and came to be used for all hard disks and optical drives

no matter how they are connected to your computer. If you think this is

confusing, imagine what it would be like if you had a system with a

SCSI hard drive, a SATA CD-ROM, and a USB memory stick, all with

unique subsystem indentifiers. The current system is not only cleaner,

but performs better as well.)

If you don't know which device node is assigned to your hard drive, fdisk can help you find out.

root@slackware:/#fdisk -lDisk /dev/sda: 72.7 GB, 72725037056 bytes 255 heads, 63 sectors/track, 8841 cylinders Units = cylinders of 16065 * 512 = 8225280 bytes

Here, you can see that my system has a hard drive located at

/dev/sda that is 72.7 GB in size. You can also

see some additional information about this hard drive.

The [-l] argument to

fdisk tells it to display the hard drives

and all the partitions it finds on those drives, but it won't make any

changes to the disks. In order to actually partition our drives, we'll

have to tell fdisk the drive on which to operate.

root@slackware:/#fdisk /dev/sdaThe number of cylinders for this disk is set to 8841. There is nothing wrong with that, but this is larger than 1024, and could in certain setups cause problems with: 1) software that runs at boot time (e.g., old versions of LILO) 2) booting and partitioning software from other OSs (e.g., DOS FDISK, OS/2 FDISK) Command (m for help):

Now we've told fdisk what disk we wish to partition, and it has dropped us into command mode after printing an annoying warning message. The 1024 cylinder limit has not been a problem for quite some time, and Slackware's boot loader will have no trouble booting disks larger than this. Typing [m] and pressing ENTER will print out a helpful message telling you what to do with fdisk.

Command (m for help): m

Command action

a toggle a bootable flag

b edit bsd disklabel

c toggle the dos compatibility flag

d delete a partition

l list known partition types

m print this menu

n add a new partition

o create a new empty DOS partition table

p print the partition table

q quit without saving changes

s create a new empty Sun disklabel

t change a partition's system id

u change display/entry units

v verify the partition table

w write table to disk and exit

x extra functionality (experts only)

Now that we know what commands will do what, it's time to begin partitioning

our drive. At a minimum, you will need a single /

partition, and you should also create a swap partition.

You might also want to make a separate /home

partition for storing user files (this will make it easier to upgrade

later or to install a different Linux operating system by keeping all of

your users' files on a separate partition). Therefore, let's go ahead and

make three partitions. The command to create a new partition is

[n] (which you noticed when you read the help).

Command: (m for help):nCommand action e extended p primary partition (1-4)pPartition number (1-4):1First cylinder (1-8841, default 1):1Last cylinder or +size or +sizeM or +sizeK (1-8841, default 8841):+8GCommand (m for help): n Command action e extended p primary partition (1-4)pPartition number (1-4):2First cylinder (975-8841, default 975):975Last cylinder or +size or +sizeM or +sizeK (975-8841, default 8841):+1G

Here we have created two partitions. The first is 8GB in size, and the second is only 1GB. We can view our existing partitions with the [p] command.

Command (m for help): p

Disk /dev/sda: 72.7 GB, 72725037056 bytes

255 heads, 63 sectors/track, 8841 cylinders

Units = cylinders of 16065 * 512 = 8225280 bytes

Device Boot Start End Blocks Id System

/dev/sda1 1 974 7823623+ 83 Linux

/dev/sda2 975 1097 987997+ 83 Linux

Both of these partitions are of type "83" which is the standard Linux

filesystem. We will need to change /dev/sda2 to

type "82" in order to make this a swap partition. We will do this with

the [t] argument to fdisk.

Command (m for help):tPartition number (1-4):2Hex code (type L to list codes):82Command (me for help):pDisk /dev/sda: 72.7 GB, 72725037056 bytes 255 heads, 63 sectors/track, 8841 cylinders Units = cylinders of 16065 * 512 = 8225280 bytes Device Boot Start End Blocks Id System /dev/sda1 1 974 7823623+ 83 Linux /dev/sda2 975 1097 987997+ 82 Linux swap

The swap partition is a special partition that is used for virtual memory by the Linux kernel. If for some reason you run out of RAM, the kernel will move the contents of some of the RAM to swap in order to prevent a crash. The size of your swap partition is up to you. A great many people have participated in a great many flamewars on the size of swap partitions, but a good rule of thumb is to make your swap partition about twice the size of your system's RAM. Since my machine has only 512MB of RAM, I decided to make my swap partition 1GB. You may wish to experiment with your swap partition's size and see what works best for you, but generally there is no harm in having "too much" swap. If you plan to use hibernation (suspend to disk), you will need to have at least as much swap space as you have physical memory (RAM), so keep that in mind.

At this point we can stop, write these changes to the disk, and

continue on, but I'm going to go ahead and make a third partition which

will be mounted at /home.

Command: (me for help):nCommand action e extended p primary partition (1-4)pPartition number (1-4):3First cylinder (1098-8841, default 1098):1098Last cylinder or +size or +sizeM or +sizeK (1098-8841, default 8841):8841

Now it's time to finish up and write these changes to disk.

Command: (me for help):wThe partition table has been altered! Calling ioctl() to re-read partition table. Syncing disks.root@slackware:/#

At this point, we are done partitioning our disks and are ready to begin the setup program. However, if you have created any extended partitions, you may wish to reboot once to ensure that they are properly read by the kernel.

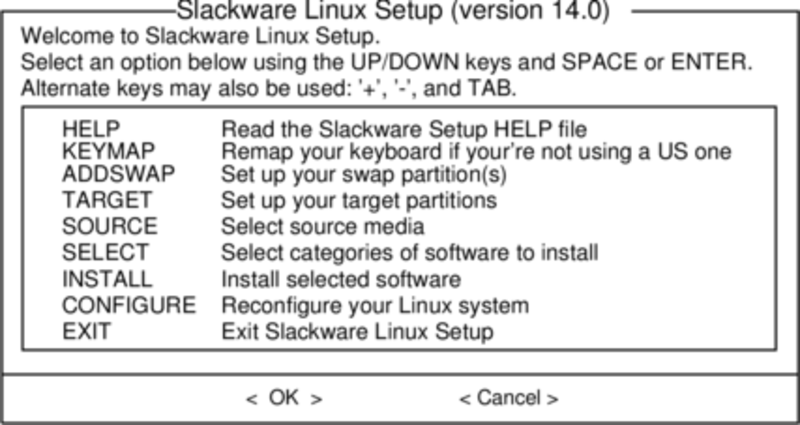

Now that you've created your partitions it's time to run the setup program to install Slackware. setup will handle formatting partitions, installing packages, and running basic configuration scripts step-by-step. In order to do so, just type setup at your shell prompt.

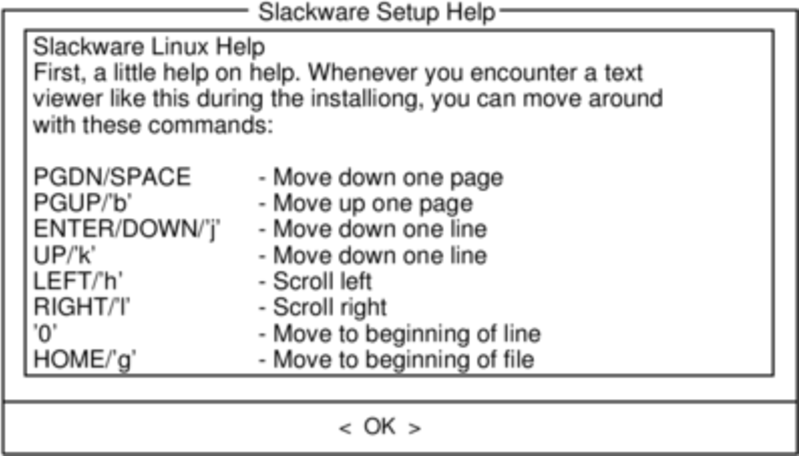

If you've never installed Slackware before, you can get a very basic over-view of the Slackware installer by reading the Help menu. Most of the information here is on navigating through the installer which should be fairly intuitive, but if you've never used a curses-based program before you may find this useful.

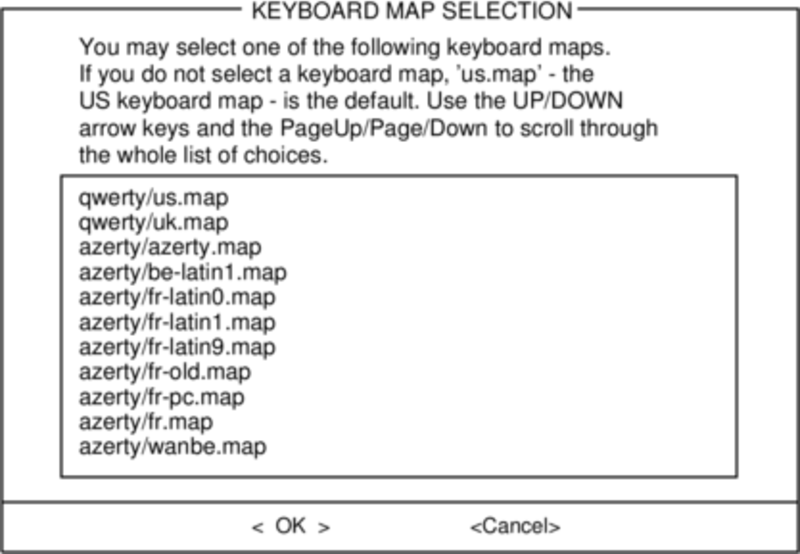

Before we go any further, Slackware gives you the opportunity to select a different mapping for your keyboard. If you're using a standard US keyboard you can safely skip this step, but if you're using an international keyboard you will want to select the correct mapping now. This ensures that the keys you press on your keyboard will do exactly what you expect them to do.

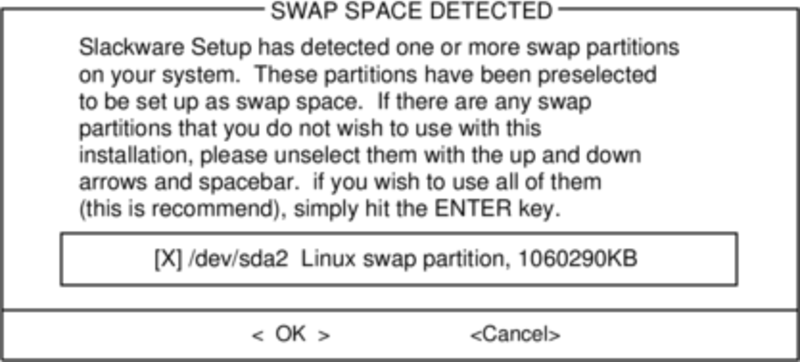

If you created a swap partition, this step will allow you to enable

it before running any memory-intensive activities like installing

packages. swap space is essentially virtual memory. It's a hard drive

partition (or a file, though Slackware's installer does not support

swap files) where regions of active system memory get copied when

your computer is out of useable RAM. This lets the computer "swap"

programs in and out of active RAM, allowing you to use more memory

than your computer actually has. This step will also add your swap

partition to /etc/fstab so it will be available

to your OS.

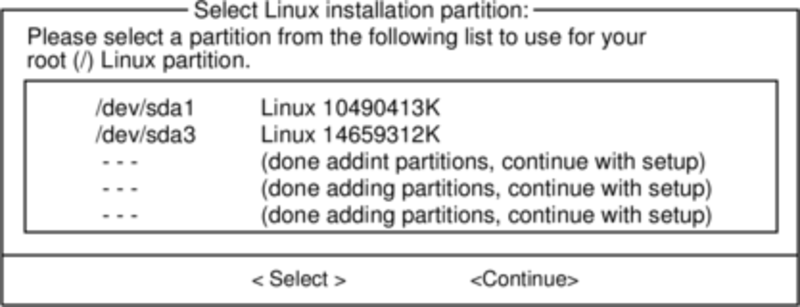

Our next step is selecting our root partition and any other

partitions we'd like Slackware to utilize. You'll be given a choice

of filesystems to use and whether or not to format the partition. If

you're installing to a new partition you must format it. If you have

a partition with data on it you'd like to save, don't. For example,

many users have a seperate /home partition used

for user data and elect not to format it on install. This lets them

install newer versions of Slackware without having to backup and

restore this data.

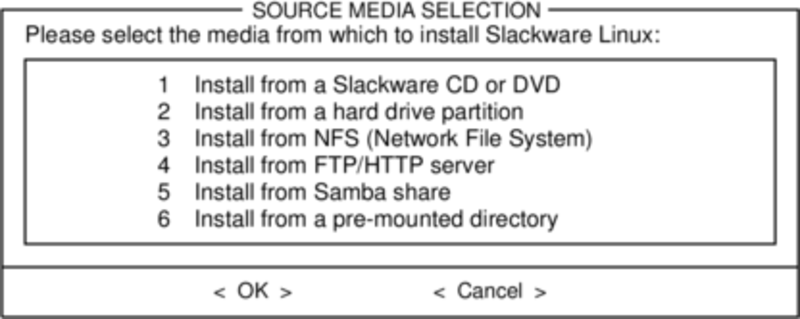

Here you'll tell the installer where to find the Slackware packages. The most common method is to use the Slackware install DVD or CDs, but various other options are available. If you have your packages installed to a partition that you setup in the previous step, you can install from that partition or a pre-mounted directory. (You may need to mount that partition with mount(8) first. See chapter 11 for more details.) Additionally, Slackware offers a variety of networked options such as NFS shares, FTP, HTTP, and Samba. If you select a network installation, Slackware will prompt you for TCP/IP information first. We're only going to discuss installation from the DVD, but other methods are similar and straightforward.

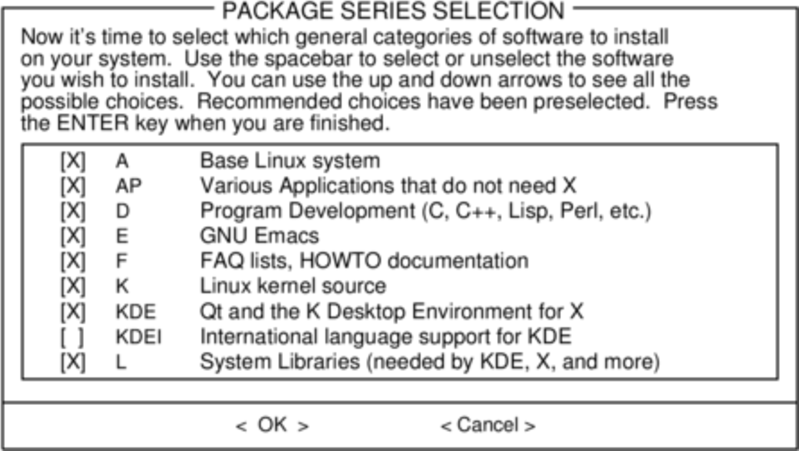

One unique feature of Slackware is its manner of dividing packages into disksets. At the beginning of time, network access to FTP servers was available only through incredibly slow 300 baud modems, so Slackware was split into disk sets that would fit onto floppy disks so users could download and install only those packages they were interested in. Today that practice continues and the installer allows you to chose which sets to install. This allows you to easily skip packages you may not want, such as X and KDE on headless servers or Emacs on everything. Please note that the "A" series is always required.

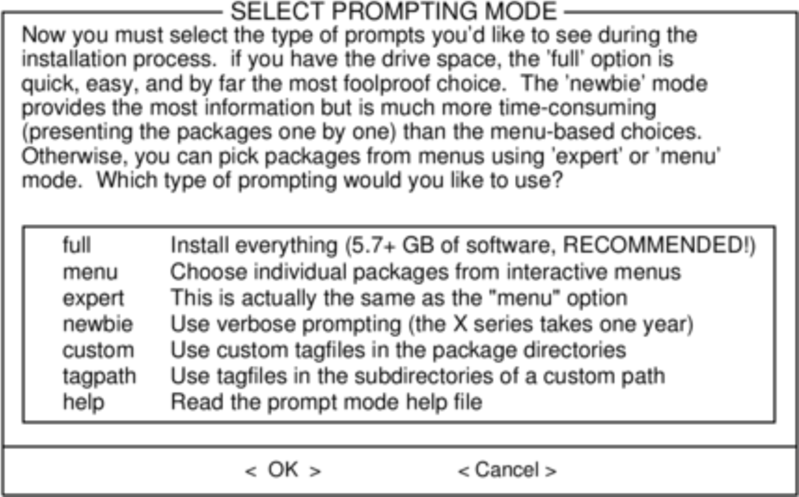

Finally we get to the meat of the installer. At this stage, Slackware will ask you what method to use to chose packages. If this is your first time installing Slackware, the "full" method is highly recommended. Even if this isn't your first time, you'll probably want to use it anyway.

The "menu" and "expert" options allow you to choose individual packages to install and are of use to skilled users familiar with the OS. These methods allow such users to quickly prune packages from the installer to build a very minimal system. If you don't know what you're doing (sometimes even if you do) you're likely to leave out crucial pieces of software and end up with a broken system.

The "newbie" method can be very helpful to a new user, but takes a very long time to install. This method will install all the required packages, then prompt you individually for every other package. The big advantage here is that is pauses and gives you a brief overview of the package contents. For a new user, this introduction into what is included with Slackware can be informative. For most other users it is a long and tedious process.

The "custom" and "tagpath" options should only be used by people with the greatest skill and expertise with Slackware. These methods allow the user to install packages from custom tagfiles. Tagfiles are only rarely used. We won't discuss them in this book.

Once all the packages are installed you're nearly finished. At this stage, Slackware will prompt you with a variety of configuration tasks for your new operating system. Many of these are optional, but most users will need to set something up here. Depending on the packages you've installed, you may be offered different configuration options than the ones shown here, but we've included all the really important ones.

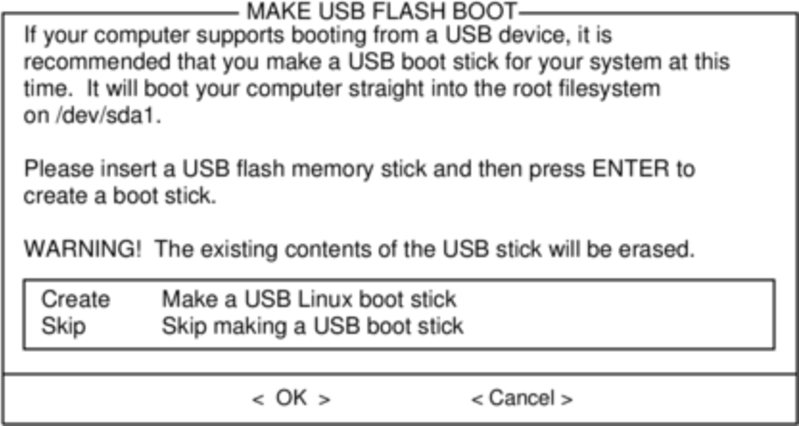

The first thing you'll likely be prompted to do is setup a boot disk. In the past this was typically a 1.44MB floppy disk, but today's Linux kernel is far too large to fit on a single floppy, so Slackware offers to create a bootable USB flash memory stick. Of course, your computer must support booting from USB in order to use a USB boot stick (most modern computers do). If you do not intend to use LILO or another traditional boot loader, you should consider making a USB boot stick. Please note that doing so will erase the contents of whatever memory stick you're using, so be careful.

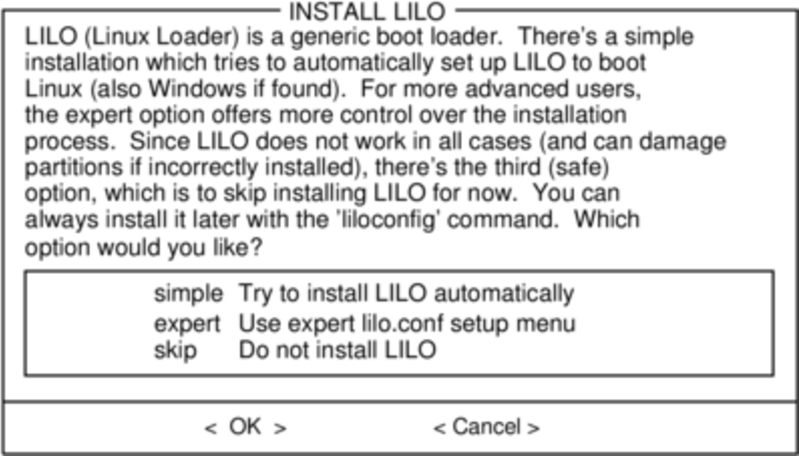

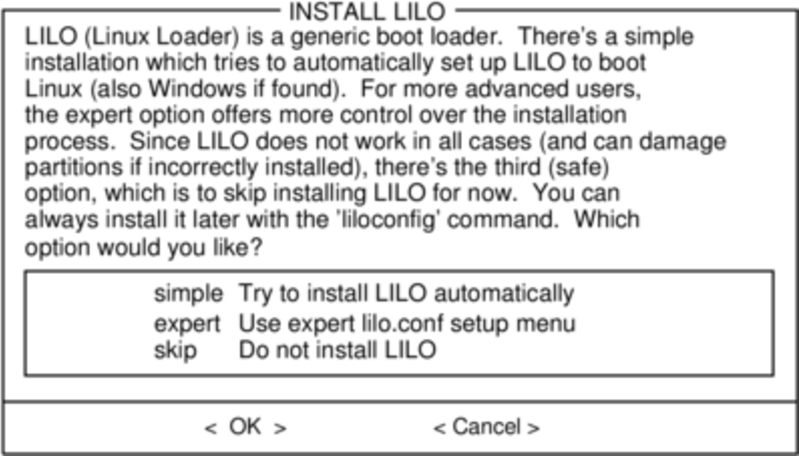

Nearly everyone will need to setup the LInux LOader, LILO. LILO is in charge of booting the Linux kernel and connecting to an initrd or the root filesystem. Without it (or some other boot loader), your new Slackware operating system will not boot. Slackware offers a few options here. The "simple" method attempts to automatically configure LILO for your computer, and works well with very simple systems. If Slackware is the only operating system on your computer, it should configure and install LILO for you without any hassels. If you don't trust the simpler method to work, or if you want to take an in-depth look at how to configure LILO, the "expert" method is really not all that complicated. This method will take you through each step and offer to setup dual-boot for Windows and other Linux operating systems. It also allows you to append kernel command parameters (most users will not need to specify any though).

LILO is a very important part of your Slackware system, so an entire section of the next chapter is devoted to it. If you're having difficulty configuring LILO at this stage, you may want to skip ahead and read Chapter 3 first, then return here.

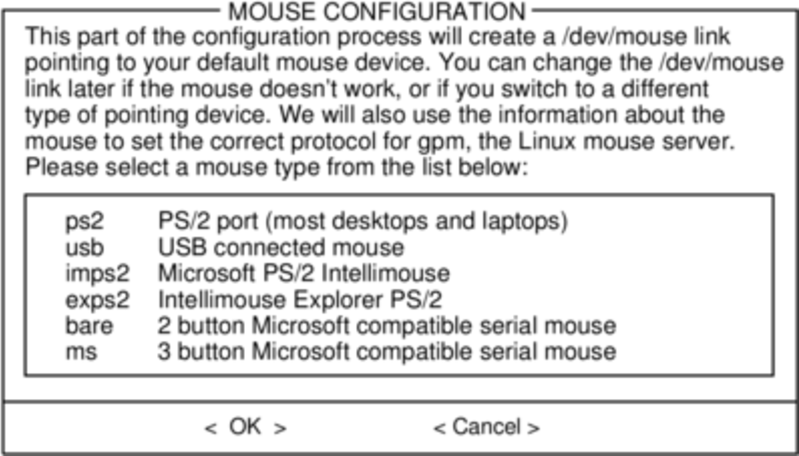

This simple step allows you to configure and activate a console mouse for use outside of the graphical desktops. By activating a console mouse, you'll be able to easily copy and paste from within the Slackware terminal. Most users will need to choose one of the first three options, but many are offered, and yes those ancient two-button serial mice do work.

The next stage in configuring your install is the network configuration. If you don't wish to configure your network at this stage, you may decline, but otherwise you'll be prompted to provide a hostname for your computer. If you're unsure what to do here, you might want to read through Chapter 14, Networking first.

The following screens will prompt you first for a hostname, then

for a domainname, such as

example.org. The combination of the hostname and the domainname

can be used to navigate between computers in your network if you

use an internal DNS service or maintain your

/etc/hosts file. If you skip setting

up your network, Slackware will name your computer "darkstar" after

a song by the Grateful Dead.

You have three options when setting your IP address; you may assign it a static IP, use DHCP, or configure a loopback connection. The simplest option, and probably the most common for laptops or computers on a basic network, is to let a DHCP server assign IP addresses dynamically. Unless you are installing Slackware for use as a network server, you probably do not need to setup a static IP address. If you're not sure which of these options to choose, pick DHCP.

Rarely DHCP servers requires you specify a DHCP hostname before you're permitted to connect. You can enter this on the Set DHCP Hostname screen. This is almost always be the same hostname you entered earlier.

If you choose to set a static IP address, Slackware will ask you to enter it along with the netmask, gateway IP address, and what nameserver to use.

The final screen during static IP address configuration is a confirmation screen, where you're permitted to accept your choices, edit them, or even restart the IP address configuration in case you decide to use DHCP instead.

Once your network configuration is completed Slackware will prompt you to configure the startup services that you wish to run automatically upon boot. Helpful descriptions of each service appear both to the right of the service name as well as at the bottom of the screen. If you're not sure what to turn on, you can safely leave the defaults in place. What services are started at boot time can be easily modified later with pkgtool.

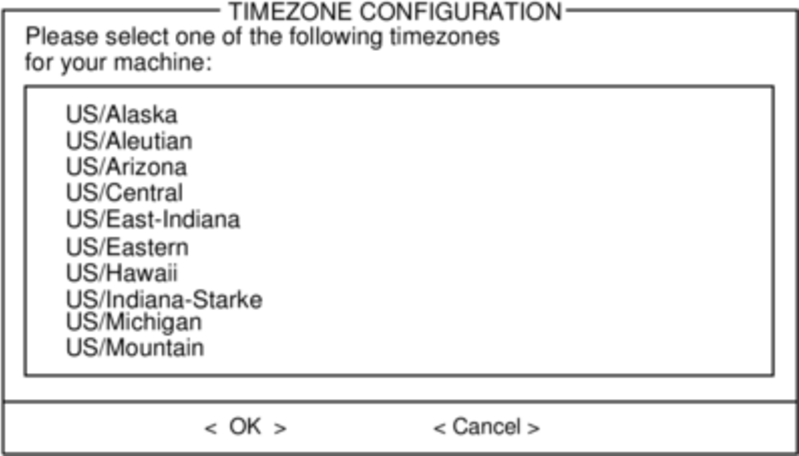

Every computer needs to keep track of the current time, and with so many timezones around the world you have to tell Slackware which one to use. If your computer's hardware clock is set to UTC (Coordinated Universal Time), you'll need to select that; most hardware clocks are not set to UTC from the factory (though you could set it that way on your own; Slackware doesn't care). Then simply select your timezone from the list provided and off you go.

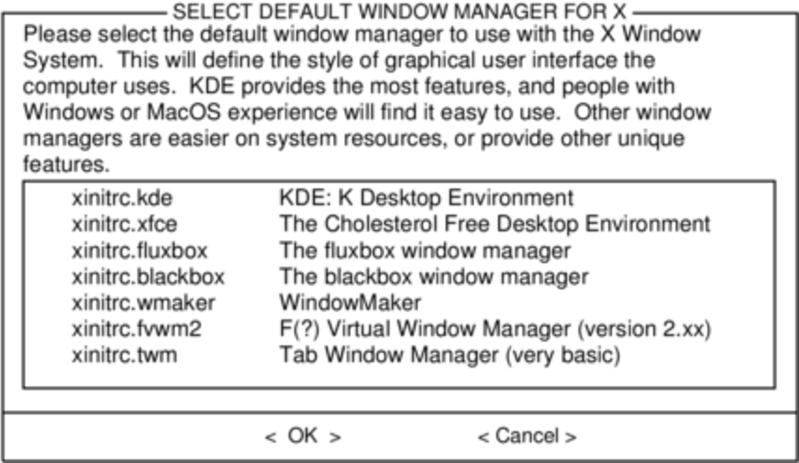

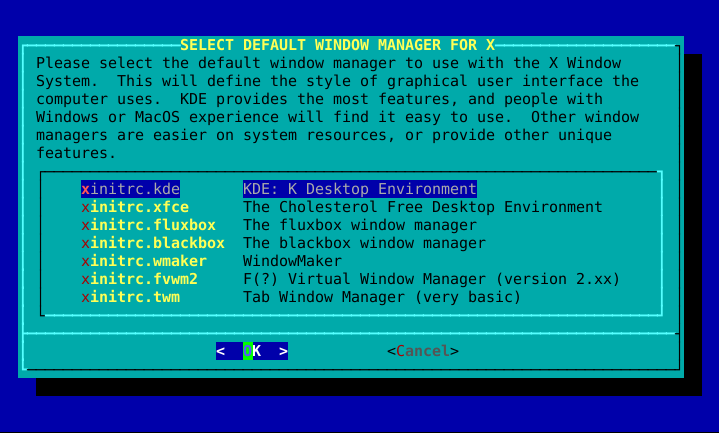

If you installed the X disk set, you'll be prompted to select a default window manager or desktop environment. What you select here will apply to every user on your computer, unless that user decides to run xwmconfig(1) and choose a different one. Don't be alarmed if the options you see below do not match the ones Slackware offers you. xwmconfig only offers choices that you installed. So for example, if you elected to skip the "KDE" disk set, KDE will not be offered.

The last configuration step is setting a root password. The root user is the "super user" on Slackware and all other UNIX-like operating systems. Think of root as the Administrator user. root knows all, sees all, and can do all, so setting a strong root password is just common sense.

With this last step complete, you can now exit the Slackware installer and reboot with a good old CTRL + ALT + DELETE. Remove the Slackware installation disk, and if you performed all the steps correctly, your computer will boot into your new Slackware linux system. If something went wrong, you probably skipped the LILO configuration step or made an error there somehow. Thankfully, the next chapter should help you sort that out.

When you have rebooted into your new Slackware installation, the very first step you should take is to create a user. By default, the only user that exists after the install is the root user, and it's dangerous to use your computer as root, given that there are no restrictions as to what that user can do.

The quickest and easiest way to create a normal user for yourself is to log in as root with the root password that you created at the end of the intallation process, and then issue the adduser command. This will interactively assist you in creating a user; see the section called “Managing Users and Groups” for more information.

Ok, now that you've gotten Slackware installed on your system, you should learn exactly what controls the boot sequence of your machine, and how to fix it should you manage to break it somehow. If you use Linux long enough, sooner or later you will make a mistake that breaks your bootloader. Fortunately, this doesn't require a reinstall to fix. Unlike many other operating systems that hide the underlying details of how they work, Linux (and in particular, Slackware) gives you full control over the boot process. Simply by editing a configuration file or two and re-running the bootloader installer, you can quickly and easily change (or break) your system. Slackware even makes it easy to dual-boot multiple operating systems, such as other Linux distributions or Microsoft Windows.

Before we go any further, a quick discussion on the Linux kernel is warranted. Slackware Linux includes at least two, but sometimes more, different kernels. While they are all compiled from the same source code, and hence are the "same", they are not identical. Depending on your architecture and Slackware version, the installer may have loaded your system with several kernels. There are kernels for single-processor systems and kernels for multi-processor systems (on 32bit Slackware). In the old days, there were lots of kernels for installing on many different kinds of hard drive controllers. More importantly for our discussion, there are "huge" kernels and "generic" kernels.

If you look inside your /boot directory, you'll

see the various kernels installed on your system.

darkstar:~#ls -1 /boot/vmlinuz*/boot/vmlinuz-huge-2.6.29.4 /boot/vmlinuz-generic-2.6.29.4

Here you can see that I have two kernels installed,

vmlinuz-huge-2.6.29.4 and

vmlinuz-generic-2.6.29.4. Each Slackware release

includes different kernel versions and sometimes even slightly

different names, so don't be alarmed if what you see doesn't exactly

match what I have listed here.

Huge kernels are exactly what you might think; they're huge. However, that does NOT mean that they have all of the possible drivers and such compiled into them. Instead, these kernels are made to boot (and run) on every conceivable computer on which Slackware is supported (there may very well be a few out there that won't boot/work with them though). They most certainly contain support for hardware your machine does not (and never will) have, but that shouldn't concern you. These kernels are included for several reasons, but probably the most important is their use by Slackware's installer - these are the kernels that the Slackware installation disks run. If you chose to let the installer configure your bootloader for you, it chooses to use these kernels due to the incredible variety of hardware they support. In contrast, the generic kernels support very little hardware without the use of external modules. If you want to use one of the generic kernels, you'll need to make use of something called an initrd, which is created using the mkinitrd(8) utility.

So why should you use a generic kernel? Currently the Slackware development team recommends use of a generic kernel for a variety of reasons. Perhaps the most obvious is size. The huge kernels are currently about twice the size of the generic kernels before they are uncompressed and loaded into memory. If you are running an older machine, or one with some small ammount of RAM, you will appreciate the savings the generic kernels offer you. Other reasons are somewhat more difficult to quantify. Conflicts between drivers included in the huge kernels do appear from time to time, and generally speaking, the huge kernels may not perform as well as the generic ones. Also, by using the generic kernels, special arguments can be passed to hardware drivers seperately, rather than requiring these options be passed on the kernel command line. Some of the tools included with Slackware work better if your kernel uses some drivers as modules rather than statically building them into the kernel. If you're having trouble understanding this, don't be alarmed: just think "huge kernel = good, generic kernel = better".

Unfortunately, using the generic kernels isn't as straightforward as using the huge kernels. In order for the generic kernel to boot your system, you must also include a few basic modules in an initird. Modules are pieces of compiled kernel code that can be inserted or removed from a running kernel (ideally using modprobe(8). This makes the system somewhat more flexible at the cost of a tiny bit of added complexity. You might find it easier to think of modules as device drivers, at least for this section. Typically you will need to add the module for whatever filesystem you chose to use for your root partition during the installer, and if your root partition is located on a SCSI disk or RAID controller, you'll need to add those modules as well. Finally, if you're using software RAID, disk encryption, or LVM, you'll also need to create an initrd regardless of whether you're using the generic kernel or not.

An initrd is a compressed cpio(1) archive, so creating one isn't very straightforward. Fortunately for you, Slackware includes a tool that makes this very easy: mkinitrd. A full discussion of mkinitrd is a bit beyond the scope of this book, but we'll show you all the highlights. For a more complete explanation, check the manpage or run mkinitrd with the [--help] argument.

darkstar:~#mkinitrd --helpmkinitrd creates an initial ramdisk (actually an initramfs cpio+gzip archive) used to load kernel modules that are needed to mount the root filesystem, or other modules that might be needed before the root filesystem is available. Other binaries may be added to the initrd, and the script is easy to modify. Be creative. :-) .... many more lines deleted ....

When using mkinitrd, you'll need to know a

few items of information: your root partition, your root filesystem,

any hard disk controllers you're using, and whether or not you're using

LVM, software RAID, or disk encryption. Unless you're using some kind

of SCSI controller (and have your root partition located on the SCSI

controller), you should only need to know your root filesystem and

partition type. Assuming you've booted into your Slackware installation

using the huge kernel, you can easily find this information with the

mount command or by viewing the contents of

/proc/mounts.

darkstar:~#mount/dev/sda1 on / type ext4 (rw,barrier=1,data=ordered) proc on /proc type proc (rw) sysfs on /sys type sysfs (rw) usbfs on /proc/bus/usb type usbfs (rw) /dev/sda2 on /home type jfs (rw) tmpfs on /dev/shm type tmpfs (rw)

In the example provided, you can see that the root partition is located

on /dev/sda1 and is an ext4 type partition. If we

want to create an initrd for this system, we simply need to tell this

information to mkinitrd.

darkstar:~#mkinitrd -f ext4 -r /dev/sda1

Note that in most cases, mkinitrd is smart enough to determine this information on its own, but it never hurts to specify it manually. Now that we've created our initrd, we simply need to tell LILO where to find it. We'll focus on that in the next section.

Looking up all those different options for

mkinitrd or worse, memorizing them, can be a

real pain though, especially if you try out different kernels

consistently. This became tedious for the Slackware development team,

so they came up with a simple configuration file,

mkinitrd.conf(5). You can find a sample file that

can be easily customized for your system at

/etc/mkinitrd.conf.sample directory. Here's mine.

darkstar:~# >/prompt>cat /etc/mkinitrd.conf.sample

# See "man mkinitrd.conf" for details on the syntax of this file

#

SOURCE_TREE="/boot/initrd-tree"

CLEAR_TREE="0"

OUTPUT_IMAGE="/boot/initrd.gz"

KERNEL_VERSION="$(uname -r)"

#KEYMAP="us"

MODULE_LIST="ext3:ext4:jfs"

#LUKSDEV="/dev/hda1"

ROOTDEV="/dev/sda1

ROOTFS="ext4"

#RESUMEDEV="/dev/hda2"

#RAID="0"

LVM="1"

#WAIT="1"

For a complete description of each of these lines and what they do,

you'll need to consult the man page for mkinitrd.conf.

Copy the sample file to to /etc/mkinitrd.conf and

edit it as desired. Once it is setup properly, you need only run

mkinitrd with the [-F] argument.

A proper initrd file will be constructed and installed for you without

you having to remember all those obscure arguments.

If you're unsure what options to specify in the configuration file or on the command-line, there is one final option. Slackware includes a nifty little utility that can tell what options are required for your currently running kernel /usr/share/mkinitrd/mkinitrd_command_generator.sh. When you run this script, it will generate a command line for mkinitrd that should work for your computer, but you may wish to check everything anyway.

darkstar:~#/usr/share/mkinitrd/mkinitrd_command_generator.shmkinitrd -c -k 2.6.33.4 -f ext3 -r /dev/sda3 -m \ usbhid:ehci-hcd:uhci-hcd:ext3 -o /boot/initrd.gz

LILO is the Linux Loader and is currently the default boot loader

installed with Slackware Linux. If you've used other Linux

distributions before, you may be more familiar with GRUB. If you prefer

to use GRUB instead, you can easily find it in the

extra/ directory on one of your Slackware CDs.

However, since LILO is the default Slackware bootloader, we'll focus

exclusively on it.

Configuring LILO can be a little daunting for new users, so Slackware comes with a special setup tool called liloconfig. Normally, liloconfig is first run by the installer, but you can run it at any time from a terminal.

liloconfig has two modes of operation:

simple and expert. The "simple" mode tries to automatically configure

lilo for you. If Slackware is the only operating system installed on

your computer, the "simple" mode will almost always do the right thing

quickly and easily. It is also very good at detecting Windows

installations and adding them to /etc/lilo.conf

so that you can choose which operating system to boot when you

turn your computer on.

In order to use "expert" mode, you'll need to know Slackware's root partition. You can also setup other linux operating systems if you know their root partitions, but this may not work as well as you expect. liloconfig will try to boot each linux operating system with Slackware's kernel, and this is probably not what you want. Fortunately, setting up Windows partitions in expert mode is trivial. One hint when using expert mode: you should almost always install LILO to the Master Boot Record (MBR). Once upon a time, it was recommended to install the boot loader onto the root partition and set that partition as bootable. Today, LILO has matured greatly and is safe to install on the MBR. In fact, you will encounter fewer problems if you do so.

liloconfig is a great way to quickly setup

your boot loader, but if you really need to know what's going on, you'll

need to look at LILO's configuration file:

lilo.conf(5) under the /etc

directory. /etc/lilo.conf is separated into

several sections. At the top, you'll find a "global" section where you

specify things like where to install LILO (generally the MBR), any

special images or screens to show on boot, and the timeout after which

LILO will boot the default operating system. Here's what the global

section of my lilo.conf file looks like in part.

# LILO configuration file boot = /dev/sda bitmap = /boot/slack.bmp bmp-colors = 255,0,255,0,255,0 bmp-table = 60,6,1,16 bmp-timer = 65,27,0,255 append=" vt.default_utf8=0" prompt timeout = 50 # VESA framebuffer console @ 1024x768x256 vga = 773 .... many more lines ommitted ....

For a complete listing of all the possible LILO options, you should

consult the man page for lilo.conf. We'll

briefly discuss the most common options in this document.

The first thing that should draw your attention is the "boot" line. This

determines where the bootloader is installed. In order to install to

the Master Boot Record (MBR) of your hard drive, you simply list the hard

drive's device entry on this line. In my case, I'm using a SATA hard drive

that shows up as a SCSI device /dev/sda. In order

to install to the boot block of a partition, you'll have to list the

partition's device entry. For example, if you are installing to the first

partition on the only SATA hard drive in your computer, you would probably

use /dev/sda1.

The "prompt" option simply tells LILO to ask (prompt) you for which

operating system to boot. Operating systems are each listed in their

own section deeper in the file. We'll get to them in a minute. The

timeout option tells LILO how long to wait (in tenths of seconds)

before booting the default OS. In my case, this is 5 seconds. Some

systems seem to take a very long time to display the boot screen, so

you may need to use a larger timeout value than I have set. This is in

part why the simple LILO installation method utilizes a very long

timeout (somewhere around 2 whole minutes). The append line in my case

was set up by liloconfig. You may (and

probably should) see something similar when looking at your own

/etc/lilo.conf. I won't go into the details of why

this line is needed, so you're just going to have to trust me that things

work better if it is present. :^)

Now that we've looked into the global section, let's take a look at the

operating systems section. Each linux operating system section begins

with an "image" line. Microsoft Windows operating systems are specified

with an "other" line. Let's take a look at a sample

/etc/lilo.conf that boots both Slackware and

Microsoft Windows.

# LILO configuration file ... global section ommitted .... # Linux bootable partition config begins image = /boot/vmlinuz-generic-2.6.29.4 root = /dev/sda1 initrd = /boot/initrd.gz label = Slackware64 read-only # Linux bootable partition config ends # Windows bootable partition config begins other = /dev/sda3 label = Windows table = /dev/sda # Windows bootable partition config ends

For Linux operating systems like Slackware, the image line specifies

which kernel to boot. In this case, we're booting

/boot/vmlinuz-generic-2.6.29.4. The remaining

sections are pretty self-explanatory. They tell LILO where to find the

root filesystem, what initrd (if any) to use, and to initially mount

the root filesystem read-only. That initrd line is very important for

anyone running a generic kernel or using LVM or software RAID. It

tells LILO (and the kernel) where to find the initrd you created using

mkinitrd.

Once you've gotten /etc/lilo.conf set up for your

machine, simply run lilo(8) to install it.

Unlike GRUB and other bootloaders, LILO requires you re-run

lilo anytime you make changes to its

configuration file, or else the new (changed) bootloader image will

not be installed, and those changes will not be reflected.

darkstar:~#liloWarning: LBA32 addressing assumed Added Slackware * Added Backup 6 warnings were issued.

Don't be too scared by many of the warnings you may see when running lilo. Unless you see a fatal error, things should be just fine. In particular, the LBA32 addressing warning is commonplace.

A bootloader (like LILO) is a very flexible thing, since it exists only to determine which hard drive, partition, or even a specific kernel on a partition to boot. This inherently suggests a choice when booting, so the idea of having more than one operating system on a computer comes very naturally to a LILO or GRUB user.

People "dual boot" for a number of reasons; some people want to have a stable Slackware install on one partition or drive and a development sandbox on another, other people might want to have Slackware on one and another Linux or BSD distribution on another, and still other people may have Slackware on one partition and a proprietary operating system (for work or for that one application that Linux simply cannot offer) on the other.

Dual booting should not be taken lightly, however, since it usually means that you'll now have two different operating systems attempting to manage the bootloader. If you dual boot, the likelihood of one OS over-writing or updating the bootloader entries without your direct intervention is great; if this happens, you'll have to modify GRUB or LILO manually so you can get into each OS.

There are two ways to dual (or multi) boot; you can put each operating system on its own hard drive (common on a desktop, with their luxury of having more than one drive bay) or each operating system on its own partition (common on a laptop where only one physical drive is present).

In order to set up a dual-boot system with each operating system on its own partition, you must first create partitions. This is easiest if done prior to installing the first operating system, in which case it's a simple case of pre-planning and carving up your hard drive as you feel necessary. See the section called “Partitioning” for information on using the fdisk or cfdisk partitioning applications.

Important

If you're dual booting two Linux distributions, it is inadvisable to attempt to share a /home directory between the systems. While it is technically possible, doing so will increase the chance of your personal configurations from becoming mauled by competing desktop environments or versions.

It is, however, safe to use a common swap partition.

You should partition your drive into at least three parts:

One partition for Slackware

One partition for the secondary OS

One partition for swap

First, install Slackware Linux onto the first partition of the hard drive as described in Chapter 2, Installation.

After Slackware has been installed, booted, and you've confirmed that everything works as expected, then reboot to the installer for the second OS. This OS will invariably offer to utilize the entire drive; you obviously do not want to do that, so constrain it to only the second partition. Furthermore, the OS will attempt to install a boot loader to the beginning of the hard drive, overwriting LILO.

You have a few possible courses of action with regards to the boot loader:

Possible Boot Loader Scenarios

- If the secondary OS is Linux, disallow it from installing a boot manager.

If you're dual booting to another Linux distribution, the installer of that distribution usually asks if you want a boot loader installed. You're certainly free to not install a boot manager for it at all, and manually manage both Slackware and the other distribution with LILO.

Depending on the distribution, you might be editing LILO more frequently than you would if you were only running Slackware; some distributions are notorious for frequent kernel updates, meaning that you'll need to edit LILO to reflect the new configuration after such an update. But if you didn't want to edit configuration files every now and again, you probably wouldn't have chosen Slackware.

- If the secondary OS is Linux, let it overwrite LILO with GRUB.

If you're dual booting to another Linux distribution, you are perfectly capable of just using GRUB rather than LILO, or install Slackware last and use LILO for both. Both LILO and GRUB have very good auto-detection features, so whichever one gets installed last should pick up the other distribution's presence and make an entry for it.

Since other distributions often attempt to auto-update their GRUB menus, there is always the chance that during an update something will become maligned and you suddenly find you can't boot into Slackware. If this happens, don't panic; just boot into the other Linux partition and manually edit GRUB so that it points to the correct partition, kernel, and initrd (if applicable) for Slackware in its menu.

- Allow the secondary OS to overwrite LILO and go back later to manually re-install and re-configure LILO.

This is not a bad choice, especially when Windows is the secondary OS, but potential pitfalls are that when Windows updates itself, it may attempt to overwrite the MBR (master boot record) again, and you'll have to re-install LILO manually again.

To re-establish LILO after another OS has erased it, you can boot from your Slackware install media and enter the setup stage. Do not re-partition your drive or re-install Slackware; skip immediately to the section called “Configure”.

Even when using the "simple" option to install, LILO should detect both operating systems and automatically configure a sensible menu for you. If it fails, then add the entries yourself.

Dual booting between different physical hard drives is often easier than with partitions since the computer's BIOS or EFI almost invariably has a boot device chooser that allows you to interrupt the boot process immediately after POST and choose what drive should get priority.

The snag key to enter the boot picker is different for each brand of motherboard; consult the motherboard's manual or read the splash screen to find out what your computer requires. Typical keys are F1, F12, DEL. For Apple computers, it is always the Option (Alt) key.

If you manage the boot priority via BIOS or EFI, then each boot loader on each hard drive is only aware of its own drive and will never interfere with one another. This is rather contrary to what a boot loader is designed to do but can be a useful workaround when dealing with proprietary operating systems which insist upon being the only OS on the system, to the detriment of the user's preference.

If you don't have the luxury of having multiple internal hard drives and don't feel comfortable juggling another partition and OS on your computer, you might also consider using a bootable USB thumbdrive or even a virtual machine to give you access to another OS. Both of these options is outside the scope of this book, but they've commonplace and might be the right choice for you, depending on your needs.

Table of Contents

So you've installed Slackware and you're staring at a terminal prompt, what now? Now would be a good time to learn about the basic command line tools. And since you're staring at a blinking curser, you probably need a little assistance in knowing how to get around, and that is what this chapter is all about.

Your Slackware Linux system comes with lots of built-in documentation

for nearly every installed application. Perhaps the most common

method of reading system documentation is

man(1). man

(short for manual) will bring up the included

man-page for any application, system call, configuration file, or

library you tell it too. For example, man man

will bring up the man-page for man itself.

Unfortunately, you may not always know what application you need to use for the task at hand. Thankfully, man has built-in search abilities. Using the [-k] switch will cause man to search for every man-page that matches your search terms.

The man-pages are organized into groups or sections by their content type. For example, section 1 is for user applications. man will search each section in order and display the first match it finds. Sometimes you will find that a man-page exists in more than one section for a given entry. In that case, you will need to specify the exact section to look in. In this book, all applications and a number of other things will have a number on their right-hand side in parenthesis. This number is the man page section where you will find information on that tool.

darkstar:~$man -k printfprintf (1) - format and print data printf (3) - formatted output conversiondarkstar:~$man 3 printf

Table 4.1. Man Page Sections

| Section | Contents |

|---|---|

| 1 | User Commands |

| 2 | System Calls |

| 3 | C Library Calls |

| 4 | Devices |

| 5 | File Formats / Protocols |

| 6 | Games |

| 7 | Conventions / Macro Packages |

| 8 | System Administration |

| 9 | Kernel API Descriptions |

| n | "New" - typically used to Tcl/Tk |

ls(1) is used to list files and directories,

their permissions, size, type, inode number, owner and group, and

plenty of additional information. For example, let's list what's in

the / directory for your new Slackware Linux system.

darkstar:~$ls /bin/ dev/ home/ lost+found/ mnt/ proc/ sbin/ sys/ usr/ boot/ etc/ lib/ media/ opt/ root/ srv/ tmp/ var/

Notice that each of the listings is a directory. These are easily distinguished from regular files due to the trailing /; standard files do not have a suffix. Additionally, executable files will have an asterisk suffix. But ls can do so much more. To get a view of the permissions of a file or directory, you'll need to do a "long list".

darkstar:~$ls -l /home/alan/Desktop-rw-r--r-- 1 alan users 15624161 2007-09-21 13:02 9780596510480.pdf -rw-r--r-- 1 alan users 3829534 2007-09-14 12:56 imgscan.zip drwxr-xr-x 3 alan root 168 2007-09-17 21:01 ipod_hack/ drwxr-xr-x 2 alan users 200 2007-12-03 22:11 libgpod/ drwxr-xr-x 2 alan users 136 2007-09-30 03:16 playground/

A long listing lets you view the permisions, user and group ownership, file size, last modified date, and of course, the file name itself. Notice that the first two entires are files, and the last three are directories. This is denoted by the very first character on the line. Regular files get a "-"; directories get a "d". There are several other file types with their own denominators. Symbolic links for example will have an "l".

Lastly, we'll show you how to list dot-files, or hidden files. Unlike other operating systems such as Microsoft Windows, there is no special property that differentiates "hidden" files from "unhidden" files. A hidden file simply begins with a dot. To display these files along with all the others, you just need to pass the [-a] argument to ls.

darkstar:~$ls -a.xine/ .xinitrc-backup .xscreensaver .xsession-errors SBo/ .xinitrc .xinitrc-xfce .xsession .xwmconfig/ Shared/

You also likely noticed that your files and directories appear in different colors. Many of the enhanced features of ls such as these colors or the trailing characters indicating file-type are special features of the ls program that are turned on by passing various arguments. As a convienience, Slackware sets up ls to use many of these optional arguments by default. These are controlled by the LS_OPTIONS and LS_COLORS environment variables. We will talk more about environment variables in chapter 5.

cd is the command used to change directories. Unlike most other commands, cd is actually not it's own program, but is a shell built-in. Basically, that means cd does not have its own man page. You'll have to check your shell's documentation for more details on the cd you may be using. For the most part though, they all behave the same.

darkstar:~$cd /darkstar:/$lsbin/ dev/ home/ lost+found/ mnt/ proc/ sbin/ sys/ usr/ boot/ etc/ lib/ media/ opt/ root/ srv/ tmp/ var/darkstar:/$cd /usr/localdarkstar:/usr/local$

Notice how the prompt changed when we changed directories? The default Slackware shell does this as a quick, easy way to see your current directory, but this is not actually a function of cd. If your shell doesn't operate in this way, you can easily get your current working directory with the pwd(1) command. (Most UNIX shells have configurable prompts that can be coaxed into providing this same functionality. In fact, this is another convience setup in the default shell for you by Slackware.)

darkstar:~$pwd/usr/local

While most applications can and will create their own files and directories, you'll often want to do this on your own. Thankfully, it's very easy using touch(1) and mkdir(1).

touch actually modifies the timestamp on a file, but if that file doesn't exist, it will be created.

darkstar:~/foo$ls -l-rw-r--r-- 1 alan users 0 2012-01-18 15:01 bar1darkstar:~/foo$touch bar2-rw-r--r-- 1 alan users 0 2012-01-18 15:01 bar1 -rw-r--r-- 1 alan users 0 2012-01-18 15:05 bar2darkstar:~/foo$touch bar1-rw-r--r-- 1 alan users 0 2012-01-18 15:05 bar1 -rw-r--r-- 1 alan users 0 2012-01-18 15:05 bar2

Note how bar2 was created in our second command,

and the third command simpl updated the timestamp on

bar1

mkdir is used for (obviously enough) making

directories. mkdir foo will create the

directory "foo" within the current working directory. Additionally,

you can use the [-p] argument to create any

missing parent directories.

darkstar:~$mkdir foodarkstar:~$mkdir /slack/foo/bar/mkdir: cannot create directory `/slack/foo/bar/': No such file or directorydarkstar:~$mkdir -p /slack/foo/bar/

In the latter case, mkdir will first create "/slack", then "/slack/foo", and finally "/slack/foo/bar". If you failed to use the [-p] argument, man would fail to create "/slack/foo/bar" unless the first two already existed, as you saw in the example.

Removing a file is as easy as creating one. The rm(1) command will remove a file (assuming of course that you have permission to do this). There are a few very common arguments to rm. The first is [-f] and is used to force the removal of a file that you may lack explicit permission to delete. The [-r] argument will remove directories and their contents recursively.

There is another tool to remove directories, the humble rmdir(1). rmdir will only remove directories that are empty, and complain noisely about those that contain files or sub-directories.

darkstar:~$lsfoo_1/ foo_2/darkstar:~$ls foo_1bar_1darkstar:~$rmdir foo_1rmdir: foo/: Directory not emptydarkstar:~$rm foo_1/bardarkstar:~$rmdir foo_1darkstar:~$ls foo_2bar_2/darkstar:~$rm -fr foo_2darkstar:~$ls

Everyone needs to package a lot of small files together for easy storage from time to time, or perhaps you need to compress very large files into a more manageable size? Maybe you want to do both of those together? Thankfully there are several tools to do just that.

You're probably familiar with .zip files. These are compressed files that contain other files and directories. While we don't normally use these files in the Linux world, they are still commonly used by other operating systems, so we occasionally have to deal with them.

In order to create a zip file, you'll (naturally) use the zip(1) command. You can compress either files or directories (or both) with zip, but you'll have to use the [-r] argument for recursive action in order to deal with directories.

darkstar:~$zip -r /tmp/home.zip /homedarkstar:~$zip /tmp/large_file.zip /tmp/large_file

The order of the arguments is very important. The first filename must be the zip file to create (if the .zip extension is ommitted, zip will add it for you) and the rest are files or directories to be added to the zip file.

Naturally, unzip(1) will decompress a zip archive file.

darkstar:~$unzip /tmp/home.zip

One of the oldest compression tools included in Slackware is gzip(1), a compression tool that is only capable or operating on a single file at a time. Whereas zip is both a compression and an archival tool, gzip is only capable of compression. At first glance this seems like a draw-back, but it is really a strength. The UNIX philosophy of making small tools that do their small jobs well allows them to be combined in myriad ways. In order to compress a file (or multiple files), simply pass them as arguments to gzip. Whenever gzip compresses a file, it adds a .gz extension and removes the original file.

darkstar:~$gzip /tmp/large_file

Decompressing is just as straight-forward with gunzip which will create a new uncompressed file and delete the old one.

darkstar:~$gunzip /tmp/large_file.gzdarkstar:~$ls /tmp/large_file*/tmp/large_file

But suppose we don't want to delete the old compressed file, we just want to read its contents or send them as input to another program? The zcat program will read the gzip file, decompress it in memory, and send the contents to the standard output (the terminal screen unless it is redirected, see the section called “Input and Output Redirection” for more details on output redirection).

darkstar:~$zcat /tmp/large_file.gzWed Aug 26 10:00:38 CDT 2009 Slackware 13.0 x86 is released as stable! Thanks to everyone who helped make this release possible -- see the RELEASE_NOTES for the credits. The ISOs are off to the replicator. This time it will be a 6 CD-ROM 32-bit set and a dual-sided 32-bit/64-bit x86/x86_64 DVD. We're taking pre-orders now at store.slackware.com. Please consider picking up a copy to help support the project. Once again, thanks to the entire Slackware community for all the help testing and fixing things and offering suggestions during this development cycle.

One alternative to gzip is the bzip2(1) compression utility which works in almost the exact same way. The advantage to bzip2 is that it boasts greater compression strength. Unfortunately, achieving that greater compression is a slow and CPU-intensive process, so bzip2 typicall takes much longer to run than other alternatives.

The latest compression utility added to Slackware is xz, which impliments the LZMA compression algorithm. This is faster than bzip2 and often compresses better as well. In fact, its blend of speed and compression strength caused it to replace gzip as the compression scheme of choice for Slackware. Unfortuantely, xz does not have a man page at the time of this writing, so to view available options, use the [--help] argument. Compressing files is accomplished with the [-z] argument, and decompression with [-d].

darkstar:~$xz -z /tmp/large_file

So great, we know how to compress files using all sorts of programs, but none of them can archive files in the way that zip does. That is until now. The Tape Archiver, or tar(1) is the most frequently used archival program in Slackware. Like other archival programs, tar generates a new file that contains other files and directories. It does not compress the generated file (often called a "tarball") by default; however, the version of tar included in Slackware supports a variety of compression schemes, including the ones mentioned above.

Invoking tar can be as easy or as complicated as you like. Typically, creating a tarball is done with the [-cvzf] arguments. Let's look at these in depth.

Table 4.2. tar Arguments

| Argument | Meaning |

|---|---|

| c | Create a tarball |

| x | Extract the contents of a tarball |

| t | Display the contents of a tarball |

| v | Be more verbose |

| z | Use gzip compression |

| j | Use bzip2 compression |

| J | Use LZMA compression |

| p | Preserve permissions |

tar requires a bit more precision than other applications in the order of its arguments. The [-f] argument must be present when reading or writing to a file for example, and the very next thing to follow must be the filename. Consider the following examples.

darkstar:~$tar -xvzf /tmp/tarball.tar.gzdarkstar:~$tar -xvfz /tmp/tarball.tar.gz

Above, the first example works as you would expect, but the second

fails because tar has been instructed to

open the z file rather than the expected

/tmp/tarball.tar.gz.

Now that we've got our arguments straightened out, lets look at a few examples of how to create and extract tarballs. As we've noted, the [-c] argument is used to create tarballs and [-x] extracts their contents. If we want to create or extract a compressed tarball though, we also have to specify the proper compression to use. Naturally, if we don't want to compress the tarball at all, we can leave these options out. The following command creates a new tarball using the gzip compression alogrithm. While it's not a strict requirement, it's also good practice to add the .tar extension to all tarballs as well as whatever extension is used by the compression algorithm.

darkstar:~$tar -czf /tmp/tarball.tar.gz /tmp/tarball/

Traditionally, UNIX and UNIX-like operating systems are filled with text files that at some point in time the system's users are going to want to read. Naturally, there are plenty of ways of reading these files, and we'll show you the most common ones.

In the early days, if you just wanted to see the contents of a file (any file, whether it was a text file or some binary program) you would use cat(1) to view them. cat is a very simple program, which takes one or more files, concatenates them (hence the name) and sends them to the standard output, which is usually your terminal screen. This was fine when the file was small and wouldn't scroll off the screen, but inadequate for larger files as it had no built-in way of moving within a document and reading it a paragraph at a time. Today, cat is still used quite extensively, but predominately in scripts or for joining two or more files into one.

darkstar:~$cat /etc/slackware-versionSlackware 14.0

Given the limitations of cat some very intelligent people sat down and began to work on an application to let them read documents one page at a time. Naturally, such applications began to be known as "pagers". One of the earliest of these was more(1), named because it would let you see "more" of the file whenever you wanted.

more will display the first few lines of a text file until your screen is full, then pause. Once you've read through that screen, you can proceed down one line by pressing ENTER, or an entire screen by pressing SPACE, or by a specified number of lines by typing a number and then the SPACE bar. more is also capable of searching through a text file for keywords; once you've displayed a file in more, press the / key and enter a keyword. Upon pressing ENTER, the text will scroll until it finds the next match.

This is clearly a big improvement over cat, but still suffers from some annoying flaws; more is not able to scroll back up through a piped file to allow you to read something you might have missed, the search function does not highlight its results, there is no horizontal scrolling, and so on. Clearly a better solution is possible.

Note

In fact, modern versions of more, such as the one shipped with Slackware, do feature a back function via the b key. However, the function is only available when opening files directly in more; not when a file is piped to more.

In order to address the short-comings of more, a new pager was developed and ironically dubbed less(1). less is a very powerful pager that supports all of the functions of more while adding lots of additional features. To begin with, less allows you to use your arrow keys to control movement within the document.

Due to its popularity, many Linux distributions have begun to

exclude more in favor of

less. Slackware includes both.

Moreover, Slackware also includes a handy little pre-processor for

less called

lesspipe.sh. This allows a user to exectute

less on a number of non-text files.

lesspipe.sh will generate text output from

running a command on these files, and display it in

less.

Less provides nearly as much functionality as one might expect from a text editor without actually being a text editor. Movement line-by-line can be done vi-style with j and k, or with the arrow keys, or ENTER. In the event that a file is too wide to fit on one screen, you can even scroll horizontally with the left and right arrow keys. The g key takes you to the top of the file, while G takes you to the end.

Searching is done as with more, by typing the / key and then your search string, but notice how the search results are highlighted for you, and typing n will take you to the next occurence of the result while N takes you to the previous occurrence.

Also as with more, files may be opened directly in less or piped to it:

darkstar:~$less /usr/doc/less-*/READMEdarkstar:~$cat /usr/doc/less*/README /usr/doc/util-linux*/README | less

There is much more to less; from within the application, type h for a full list of commands.

Links are a method of referring to one file by more than one name. By using the ln(1) application, a user can reference one file with more than one name. The two files are not carbon-copies of one another, but rather are the exact same file, just with a different name. To remove the file entirely, all of its names must be deleted. (This is actually the result of the way that rm and other tools like it work. Rather than remove the contents of the file, they simply remove the reference to the file, freeing that space to be re-used. ln will create a second reference or "link" to that file.)

darkstar:~$ln /etc/slackware-version foodarkstar:~$cat fooSlackware 14.0darkstar:~$ls -l /etc/slackware-version foo-rw-r--r-- 1 root root 17 2007-06-10 02:23 /etc/slackware-version -rw-r--r-- 1 root root 17 2007-06-10 02:23 foo

Another type of link exists, the symlink. Symlinks, rather than being another reference to the same file, are actually a special kind of file in their own right. These symlinks point to another file or directory. The primary advantage of symlinks is that they can refer to directories as well as files, and they can span multiple filesystems. These are created with the [-s] argument.

darkstar:~$ln -s /etc/slackware-version foodarkstar:~$cat fooSlackware 140darkstar:~$ls -l /etc/slackware-version foo-rw-r--r-- 1 root root 17 2007-06-10 02:23 /etc/slackware-version lrwxrwxrwx 1 root root 22 2008-01-25 04:16 foo -> /etc/slackware-version

When using symlinks, remember that if the original file is deleted, your symlink is useless; it simply points at a file that doesn't exist anymore.

Table of Contents

Yeah, what exactly is a shell? Well, a shell is basically a command-line user environment. In essence, it is an application that runs when the user logs in and allows him to run additional applications. In some ways it is very similar to a graphical user interface, in that it provides a framework for executing commands and launching programs. There are many shells included with a full install of Slackware, but in this book we're only going to discuss bash(1), the Bourne Again Shell. Advanced users might want to consider using the powerful zsh(1), and users familiar with older UNIX systems might appreciate ksh. The truly masochistic might choose the csh, but new users should stick to bash.

All shells make certain tasks easier for the user by keeping track of

things in environment variables. An environment variable is simply a

shorter name for some bit of information that the user wishes to store

and make use of later. For example, the environment variable PS1 tells

bash how to format its prompt. Other

variables may tell applications how to run. For example, the LESSOPEN

variable tells less to run that handy

lesspipe.sh preprocessor we talked about, and

LS_OPTIONS tuns on color for ls.

Setting your own envirtonment variables is easy. bash includes two built-in functions for handling this: set and export. Additionally, an environment variable can be removed by using unset. (Don't panic if you accidently unset an environment variable and don't know what it would do. You can reset all the default variables by logging out of your terminal and logging back in.) You can reference a variable by placing a dollar sign ($) in front of it.

darkstar:~$set FOO=bardarkstar:~$echo $FOObar

The primary difference between set and export is that export will (naturally) export the variable to any sub-shells. (A sub-shell is simply another shell running inside a parent shell.) You can easily see this behavior when working with the PS1 variable that controls the bash prompt.

darkstar:~$set PS1='FOO 'darkstar:~$export PS1='FOO 'FOO

There are many important environment variables that

bash and other shells use, but one of the

most important ones you will run across is PATH. PATH is simply a list

of directories to search through for applications. For example,

top(1) is located at

/usr/bin/top. You could run it simply by

specifying the complete path to it, but if

/usr/bin is in your PATH variable,

bash will check there if you don't specify a

complete path one your own. You will most likely first notice this

when you attempt to run a program that is not in your PATH as a normal

user, for instance, ifconfig(8).

darkstar:~$ifconfigbash: ifconfig: command not founddarkstar:~$echo $PATH/usr/local/bin:/usr/bin:/bin:/usr/X11R6/bin:/usr/games:/opt/www/htdig/bin:.

Above, you see a typical PATH for a mortal user. You can change it on your own the same as any other environment variable. If you login as root however, you'll see that root has a different PATH.

darkstar:~$su -Password:darkstar:~#echo $PATH/usr/local/sbin:/usr/sbin:/sbin:/usr/local/bin:/usr/bin:/bin:/usr/X11R6/bin:/usr/games:/opt/www/htdig/bin

Wildcards are special characters that tell the shell to match certain criteria. If you have experience with DOS, you'll recognize * as a wildcard that matches anything. bash makes use of this wildcard and several others to enable you to easily define exactly what you want to do.

This first and most common of these is, of course, *. The asterisk

matches any character or combination of characters, including none.

Thus b* would match any files named b, ba, bab,

babc, bcdb, and so forth. Slightly less common is the ?. This

wildcard matches one instance of any character, so

b? would match ba and bb, but not b or bab.

darkstar:~$touch b ba babdarkstar:~$ls *b ba babdarkstar:~$ls b?ba

No, the fun doesn't stop there! In addition to these two we also have the bracket pair "[ ]" which allows us to fine tune exactly what we want to match. Whenever bash see the bracket pair, it substitutes the contents of the bracket. Any combination of letters or numbers may be specified in the bracket as long as they are comma seperated. Additionally, ranges of numbers and letters may be specified as well. This is probably best shown by example.

darkstar:~$ls a[1-4,9]a1 a2 a3 a4 a9